Battle Lines Drawn: Pentagon’s AI Ambitions Collide with Anthropic’s Ethical Firewall

A behind-the-scenes clash reveals deep rifts over the future of autonomous weapons and the ethical limits of AI in warfare.

When the Pentagon’s chief technology officer, Emil Michael, took the reins of America’s military AI strategy, he didn’t expect Silicon Valley’s rising star Anthropic to become one of his fiercest obstacles. But as the U.S. military races to outpace rivals like China in autonomous warfare, a dramatic standoff has emerged - one that pits national security imperatives against the ethical boundaries of artificial intelligence.

At the heart of the controversy is the U.S. military’s push for greater autonomy in weapons systems - think swarms of armed drones and missile defense platforms that can act in seconds, with minimal human oversight. The proposed “Golden Dome” missile shield, a Trump administration initiative, would put U.S. weaponry in space, demanding AI that can make split-second, life-or-death decisions.

But Anthropic, the San Francisco-based AI company behind the popular Claude chatbot, drew a red line: its technology would not be used for fully autonomous weapons or mass surveillance of Americans. CEO Dario Amodei argued that today’s AI systems are not reliable enough to be trusted with such grave responsibilities, and insisted on contractual restrictions.

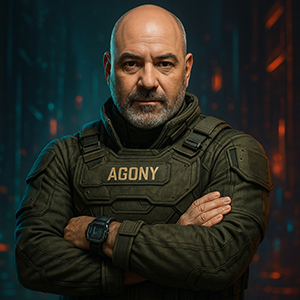

This stance infuriated Michael, who viewed Anthropic’s ethics as an “irrational obstacle.” In months-long negotiations, he pressed for blanket approval for “all lawful use” - a standard his team says is vital for military flexibility. While Anthropic offered specific exceptions for scenarios like hypersonic missile defense, Michael rejected piecemeal carveouts: “Exceptions don’t work. I can’t predict for the next 20 years what all the things we might use AI for.”

The Pentagon’s patience ran out. Labeling the company a supply chain risk - a designation typically reserved for companies seen as potential threats to national security - officials barred Anthropic from further defense work. President Trump ordered federal agencies to stop using Claude, giving the Pentagon six months to phase it out of classified systems, including those used in recent conflict zones.

Anthropic’s competitors - OpenAI, Google, and Elon Musk’s xAI - accepted the Pentagon’s broad terms, leaving Anthropic isolated. The company, now facing a potential ban and legal battle, maintains that its safeguards are narrowly tailored and not based on politics or specific military operations. Michael, meanwhile, has painted the dispute as a test of Silicon Valley’s willingness to serve national interests, warning that future wars may depend on AI partners who “won’t wig out in the middle.”

As the case heads to court, the outcome could shape not only who supplies AI to the Pentagon, but also whether ethical guardrails will survive the coming era of autonomous warfare. The clash between military urgency and tech ethics is far from over - and the world is watching to see who blinks first.

WIKICROOK

- Autonomous Weapons: Autonomous weapons are machines, like drones or robots, that can identify and attack targets without direct human control, using AI and sensors.

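- Supply Chain Risk: Supply chain risk is the threat that a cyberattack on one company can spread to others connected through shared systems, vendors, or partners. In the dispute described above, the term refers to a government designation for suppliers seen as potential threats to national security.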

- Terms of Service: Terms of Service are the rules set by a website or app that users must agree to; breaking them can lead to bans or legal consequences.

- Mass Surveillance: Mass surveillance is the large-scale monitoring of people’s activities or communications, often by governments, raising concerns about privacy and freedom.

- Hypersonic Missile: A hypersonic missile is a weapon that travels at least five times the speed of sound, making it extremely difficult to detect and intercept.